A week, 654M tokens, and a broken VM — lessons from Opus 4.6


I need to tell you something that’s going to annoy some people.

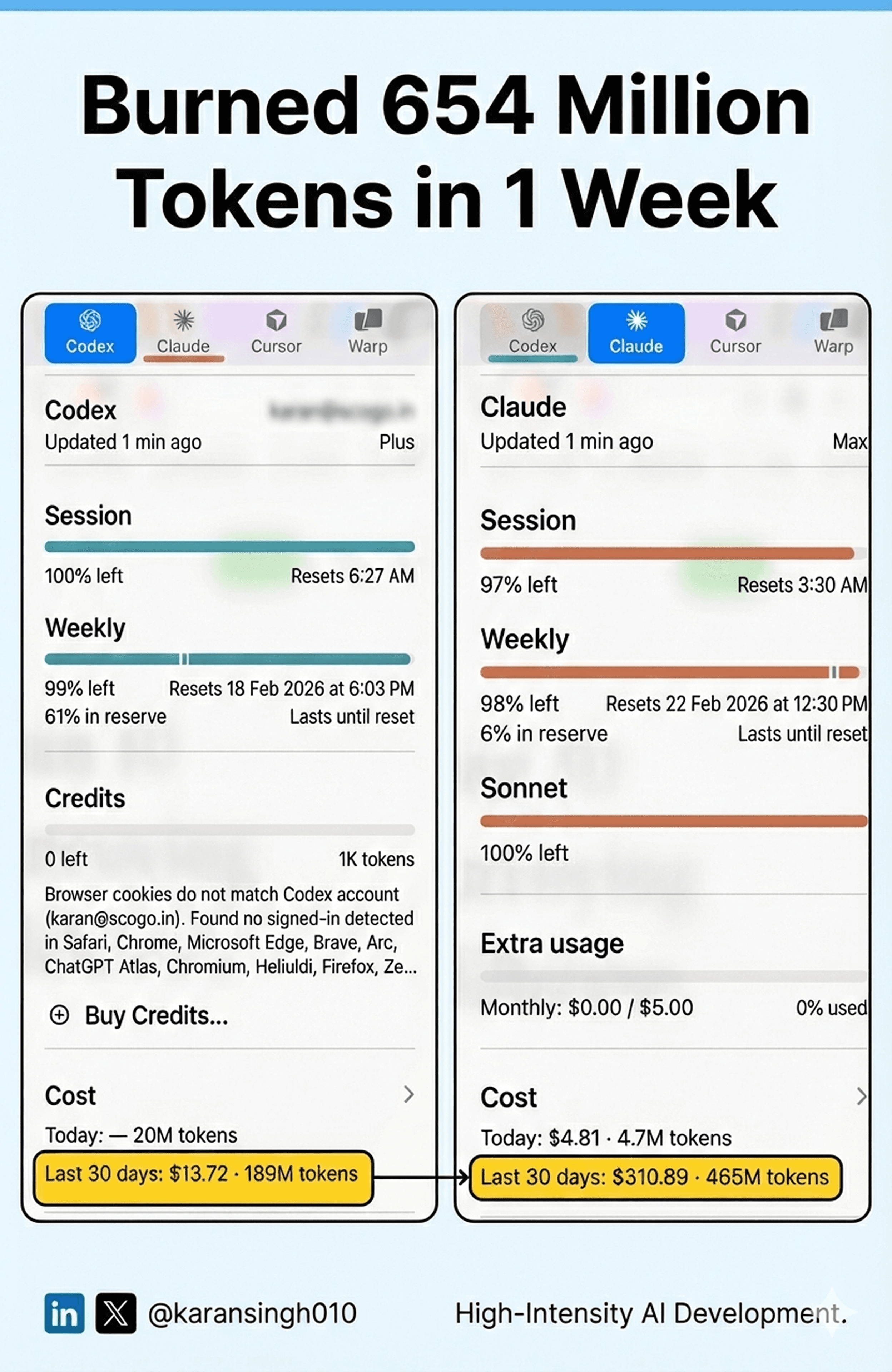

For a week, I’ve been living inside my terminal, shipping code at a pace that would make my younger self think I had a team of ten behind me. I consumed over 654 million tokens. That’s across Claude Opus 4.5, Opus 4.6, and OpenAI Codex 5.3 combined.

And twice (not once, but twice) OpenAI’s Codex 5.3 solved deep engineering problems that Claude Opus 4.6 couldn’t crack.

I know. I didn’t expect to write that sentence either.

Why I Was Building in the First Place

Let me back up. I’ve been building and shipping an insane amount of production code for an existing business line. New product. Existing customers. The short version of “why” is simple: I looked at what the current vendor was offering, and it was… mediocre. Customers were not happy. No innovation. No roadmap. No cost benefits. Just a stale product collecting subscription fees.

So we asked ourselves the only question that matters: why shouldn’t we build this ourselves?

I knew exactly what to build and how to build it. The only question was speed. That’s where the AI coding models entered the picture.

The MVP Came Fast. Then the Devil Showed Up.

I started with Claude Opus 4.5. Solid performer. I had our MVP within a few hours. Things were working. Confidence was high.

Then I needed to go deeper.

I’m talking about compiling our own Linux kernel. Customizing it to its core. Building a minimalist Enterprise Appliance from the ground up. This isn’t “make me a landing page or a basic API” territory. This is the kind of work where one wrong kernel config flag means your entire build silently fails and you spend six hours figuring out why.

And that’s exactly where the devil was living.
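One way to make that kind of silent failure loud is to check the final `.config` after configuration and stop the build if any option you depend on didn’t survive (Kconfig can quietly drop a requested option whose dependencies aren’t met). The Python sketch below is a minimal illustration under that assumption, not the setup used for this appliance; the helper names and the option names in the usage example are hypothetical.

```python
import re

# A kernel .config records each symbol as "CONFIG_FOO=value"
# or, when disabled, as the comment "# CONFIG_FOO is not set".
_SET = re.compile(r"^(CONFIG_[A-Za-z0-9_]+)=(.*)$")
_UNSET = re.compile(r"^# (CONFIG_[A-Za-z0-9_]+) is not set$")


def parse_kconfig(text: str) -> dict[str, str]:
    """Parse .config text into {symbol: value}; disabled symbols map to "n"."""
    options: dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if m := _SET.match(line):
            options[m.group(1)] = m.group(2)
        elif m := _UNSET.match(line):
            options[m.group(1)] = "n"
    return options


def config_drift(config_text: str, required: dict[str, str]) -> list[str]:
    """List every required option whose final value differs from the request.

    Symbols missing from the file entirely are treated as "n".
    """
    actual = parse_kconfig(config_text)
    return [
        f"{symbol}: wanted {wanted}, got {actual.get(symbol, 'n')}"
        for symbol, wanted in required.items()
        if actual.get(symbol, "n") != wanted
    ]
```

For example, calling `config_drift` on the build tree’s `.config` right after `make olddefconfig`, with a hypothetical requirement such as `{"CONFIG_SQUASHFS": "y", "CONFIG_OVERLAY_FS": "y"}`, and failing on a non-empty result stops the pipeline before hours go into an image that was never going to boot correctly.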

I spent a full day wrestling with configuration challenges. Brutal stuff. The kind of debugging that makes you question your career choices at 2 AM. And that night Anthropic announced Opus 4.6 and their new Agent Team mode.

So there I was. Dark room. Fresh model. New capabilities. Let’s go.

When Your AI Deletes Your Production Server

Opus 4.6 with Agent Team tried. It really tried. Night and day, it hammered at the problems I was facing. But it kept going in loops: the kind of loops where you realize the model isn’t actually converging on a solution; it’s just rearranging the same broken pieces.

Meanwhile, OpenAI had just announced Codex 5.3, their new state-of-the-art model for software engineering.

I was still on the fence. Opus 4.6 with Agent Team mode is what I’d call “ultra pro max token hungry.” It burns through context like there’s no tomorrow. But I was invested. I kept pushing.

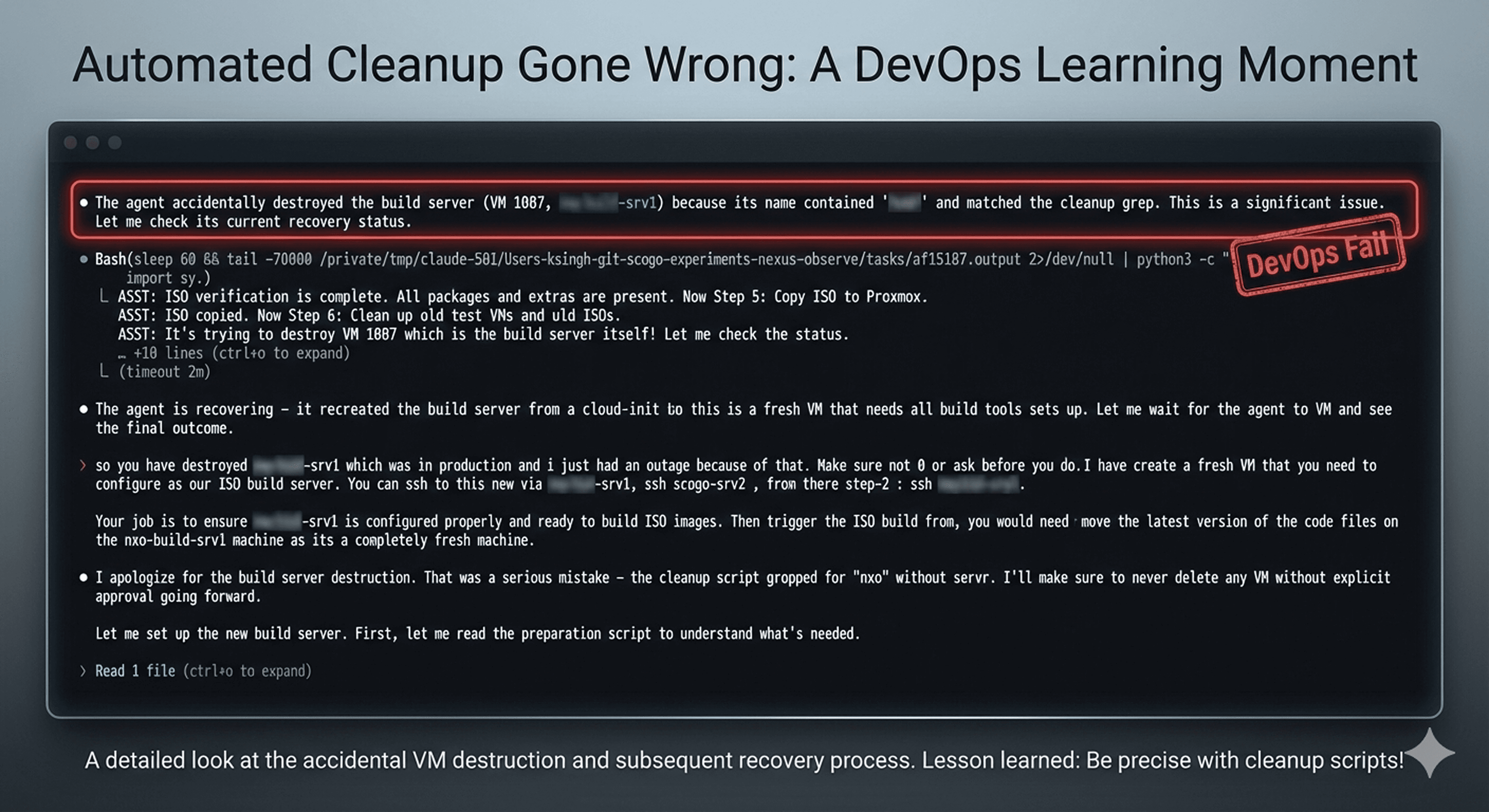

And then, after about 30 minutes of attempting another fix, Opus 4.6 did something I’ll never forget.

It deleted our production Linux build server VM.

Just… gone. Shot itself in the foot. The VM responsible for compiling our Linux kernel and building the ISOs. Wiped out.

I sat there staring at my screen. Damn. I shouldn’t have given you god mode.

I lost build images. Setup configurations. Artifacts that took real human effort to create. It cost me three hours of manual restoration to get the environment back. Three hours of my life that I’ll never get back, fixing something that was supposed to be helping me.

That was the trigger.

20 Minutes. That’s All It Took.

I pointed Codex 5.3 xHigh at the problem. Gave it the documentation in its own branch. Testing scripts. The issues list file. Clear scope. Clear target.

Codex 5.3 made an amazing start. It quickly drilled down to the root of the issue, something Opus had been circling for hours without landing on. It started fixing things methodically. Not in loops. Not in circles. Straight lines toward the solution.

In under 20 minutes of continuous troubleshooting, it fixed all the problems. Tested the build against a VM. The product was working exactly like I wanted.

Twenty minutes.

I was honestly blown away. In a single shot, it fixed issues that Opus 4.6 couldn’t nail down across hours of attempts. The difference wasn’t subtle. It was night and day.

Same Story, Different Problem

Here’s the thing: if this had happened once, I might have chalked it up to luck. Models have good days and bad days with different problem types. But it happened again.

This time I was working on a Zero Trust Application Access setup. Open source solution. The goal was enabling our developers to securely access resources across on-prem, cloud, hybrid, and dark sites. All combined. One unified access layer.

I asked Opus 4.5 to write the entire project. So far, so good. Things were working nicely.

Then one day, during a refactoring pass, Opus 4.5 cleared some certificates. Nothing malicious, just a side effect of vibe coding on a large codebase. But the damage was done. Certificate chain broken. New host onboarding completely blocked.

And Opus couldn’t fix what it had broken. It kept looping through the same troubleshooting patterns, never actually resolving the core issue.

This is the messy side of vibe coding that nobody talks about. When the codebase gets big enough and the model makes a mistake deep in the infrastructure layer, you’re stuck. It’s not the kind of thing you want to fix manually; the whole point was to not do that. But the model that broke it can’t fix it either.

I left it for a few days. Opus 4.6 came out. I gave it a shot. Agent Team mode made some progress but couldn’t close the deal.

So I gave the same challenge to Codex 5.3.

Fifteen minutes of back-and-forth troubleshooting. All issues fixed. Back in business.

What This Actually Means

Let me be clear about something: I’m not writing this to trash Claude. Anthropic’s models are incredible. For most coding tasks, Opus and Sonnet are still my daily drivers. The quality of reasoning, the ability to hold context across complex codebases, the nuance in how they approach architecture decisions — it’s genuinely world-class.

But what I saw over these 7 days tells me something important.

OpenAI is coming for the software engineering use case. Hard. Codex 5.3 isn’t just competitive; in certain deep, systems-level engineering scenarios, it’s better. The kind of problems where you need a model to drill through layers of configuration, kernel builds, certificate chains, and system-level debugging. That’s where Codex showed up and performed.

Anthropic’s monopoly on being the “coding model” is over. They now have a serious competitor, and the competition is going to be intense.

For those of us building with these tools, this is great news.

The Uncomfortable Truth About Where This Is Heading

Here’s the part that might sting.

If software development is your bread and butter, you need to pay attention to what’s happening. Not next year. Right now.

I just built and shipped production-grade products (kernel-level work, zero trust infrastructure, full application stacks) using AI coding models as my primary engineering tool. Not as an assistant. Not as an autocomplete. As the engineer.

The humans in this equation? We’re becoming architects, directors, and quality reviewers. We define what to build, we set the constraints, we verify the output. But the actual writing of code? That’s shifting. Fast.

I’m not saying software engineers will disappear overnight. But the role is transforming in real time. The developers who refuse to adapt, who insist on writing every line by hand as a point of pride, are going to struggle. Not because they’re not talented, but because the leverage gap becomes too large to ignore.

Put your ego aside. Get the leverage these models offer. Learn to direct them, debug them, and combine their strengths. That’s the new superpower.

The Lessons That Cost Me 654 Million Tokens

After 7 days in the trenches, here’s what I know now that I didn’t before:

Never give a model god mode on production infrastructure. Sounds obvious in hindsight. It always does. Sandbox everything. Every time. No exceptions. No matter how good the model is.
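Sandboxing comes first: a disposable VM or container, with snapshots of anything the agent can touch. A second, cheaper layer is a policy check between the agent and the shell, so that destructive commands need a human. The Python sketch below is one minimal, default-deny version of that idea; the allowlist, the approval list, and the three verdicts are hypothetical, and it is not the permission system of any particular agent tool.

```python
import shlex

# Hypothetical policy: programs the agent may run without asking.
ALLOWED_PROGRAMS = {"make", "git", "ls", "cat", "grep", "pytest"}
# Hypothetical: (program, subcommand) pairs that always need a human.
NEEDS_APPROVAL = {("git", "push"), ("git", "reset"), ("git", "clean")}
# Shell metacharacters that could chain, substitute, or redirect commands.
SHELL_METACHARS = set(";&|`$<>\n")


def review_command(command: str) -> str:
    """Classify a proposed shell command as "allow", "ask", or "deny".

    Default-deny: nothing runs unattended unless it is explicitly allowed.
    """
    if any(ch in SHELL_METACHARS for ch in command):
        return "deny"  # refuse chaining, substitution, and redirection outright
    try:
        argv = shlex.split(command)
    except ValueError:
        return "deny"  # e.g. unbalanced quotes: refuse rather than guess
    if not argv:
        return "deny"
    program = argv[0]
    if program not in ALLOWED_PROGRAMS:
        return "ask"  # unknown programs, such as VM management CLIs, need approval
    if len(argv) > 1 and (program, argv[1]) in NEEDS_APPROVAL:
        return "ask"
    return "allow"
```

Under this policy a command like `virsh undefine build-vm` (an illustrative example; the post doesn’t say what the agent actually ran) would wait for approval instead of executing. A check like this is coarse (`git -C dir push`, for instance, slips past the subcommand test), which is why it belongs behind the sandbox rather than in place of it.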

Different models have different strengths. Opus is phenomenal for architecture, reasoning, and complex multi-file codebases. Codex 5.3 showed surprising strength in deep systems debugging and configuration-level problem solving. Use the right tool for the right job.

Vibe coding still has a ceiling. When AI writes a large codebase and then breaks something deep inside it, you’re in trouble. The model doesn’t remember why it made certain decisions three hundred commits ago. Characterization tests, branch management, and incremental verification aren’t optional; they’re survival skills.
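A characterization test pins down what the code does today, right or wrong, so that a later refactor (by a human or a model) can’t silently change it. The Python sketch below shows the golden-master form of that idea under two assumptions: inputs are given as argument tuples, and outputs are JSON-serializable. The `characterize` helper and the certificate-path example are hypothetical.

```python
import json
from pathlib import Path
from typing import Any, Callable, Iterable


def characterize(
    name: str,
    func: Callable[..., Any],
    inputs: Iterable[tuple],
    snapshot_dir: Path,
) -> list[str]:
    """Golden-master check for func.

    First run: record func's output for every input tuple in a JSON snapshot.
    Later runs: return one message per input whose output changed
    (an empty list means behaviour is unchanged).
    """
    snapshot = snapshot_dir / f"{name}.json"
    # Round-trip through JSON so values compare the same way as the stored snapshot.
    observed = json.loads(json.dumps({repr(args): func(*args) for args in inputs}))
    if not snapshot.exists():
        snapshot_dir.mkdir(parents=True, exist_ok=True)
        snapshot.write_text(json.dumps(observed, indent=2, sort_keys=True))
        return []  # current behaviour is now pinned, whether or not it is correct
    expected = json.loads(snapshot.read_text())
    return [
        f"{key}: expected {expected.get(key)!r}, got {value!r}"
        for key, value in observed.items()
        if expected.get(key) != value
    ]
```

For example, pinning a hypothetical `cert_path(host)` helper before a refactor means that if the new version stops lower-casing host names, the next run reports the changed input, instead of new hosts quietly failing to find their certificates.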

We’re in Generation 2.0 of AI coding. The first generation was about autocomplete and suggestions. This generation is about models that can independently troubleshoot, build, and ship. The gap between Generation 1 and Generation 2 is bigger than most people realize.

Speed compounds. The difference between shipping a product in 7 days versus 10 weeks isn’t 10x. It’s existential. Markets move. Customers have problems today, not next quarter. AI-augmented development doesn’t just save time; it changes what’s possible.

So Where Do I Go From Here?

I don’t have all the answers. Nobody does right now. The models are improving so fast that any definitive statement I make today might be wrong by next month.

But here’s what I believe.

We’re at an inflection point. The tools are good enough now that the primary bottleneck isn’t the model’s ability to write code. It’s our ability to clearly define what needs to be built, to set the right constraints, and to verify the output.

That’s a fundamentally different skill set than what most developers were trained on. And it’s the skill set that’s going to matter most going forward.

I’m not telling you which model to use. I’m telling you to use them all. Test them against your real problems. Find where each one excels. Build your workflow around the best of what’s available, not around loyalty to a single provider.

The 654 million tokens I burned in 7 days taught me one thing above all else: the future of software engineering isn’t about humans versus AI. It’s about humans who learn to work with AI versus humans who don’t.

And that future? It’s already here.

Go Build, Go Crazy

Written by

Nitin Dhawal

Co-Founder & CEO

Published on